Last week, Brandon Sanderson posted a video from a conference where he gave a talk titled “The Hidden Cost of AI Art.” In it, he argues that writers who use AI are not true artists, because the act of creating true art is something that changes the artist. This is true even if AI becomes good enough to write books that are technically better than human-written books. Therefore, aspiring authors should not use AI, because it’s not going to turn them into true artists. Journey before destination. You are the art.

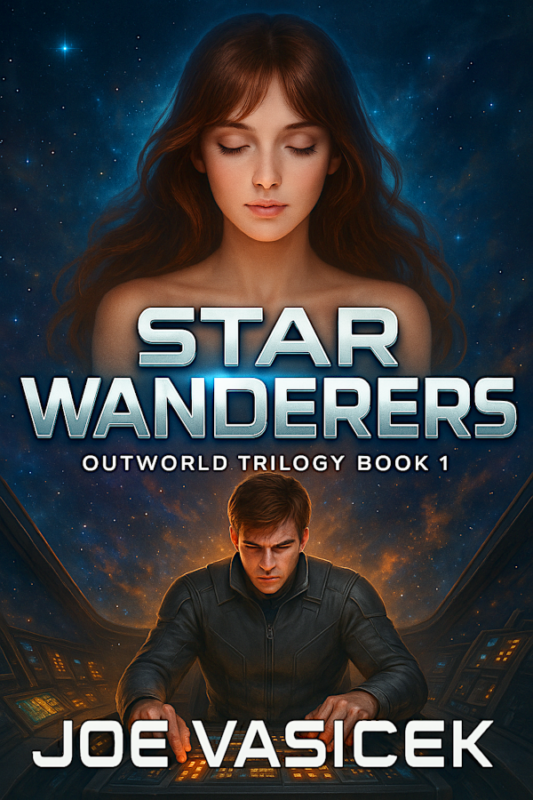

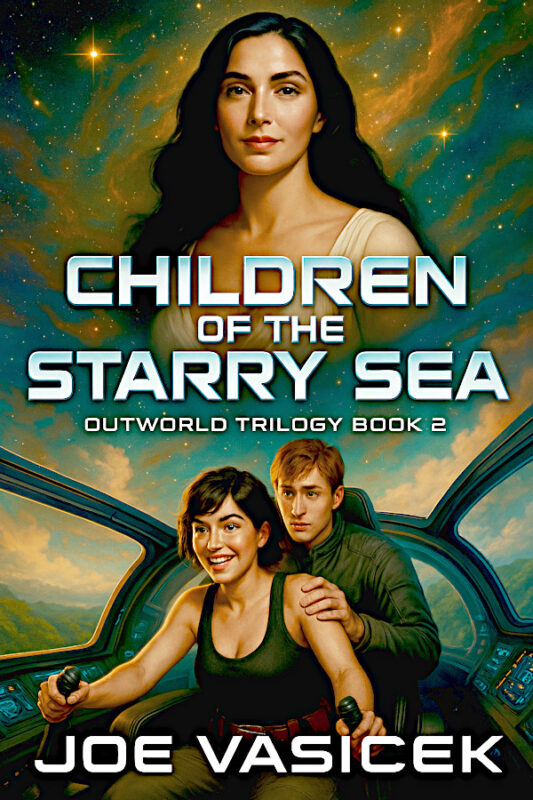

Obviously, I disagree very strongly with Brandon on this point. For the past several years, I’ve been reworking my creative process from the ground up, in an effort to figure out how best to use AI to not only write faster, but to write better books. I’ve experimented with a lot of different things, some of which have worked, most of which haven’t. And I’ve published several AI-assisted books, many of which have a higher star rating than most of my human-written books. So I think it’s safe to say that I have some experience on this subject, at least as much as Brandon himself, if not more.

Brandon compares the rise of generative AI with the story of John Henry and the steam-powered rock drill, in which John Henry beat the machine but died from overexertion. John Henry showed that a man can still beat the machine, but the machine went on to change the world anyway.

But I don’t think that’s the right story when it comes to AI. It’s far too simplistic, pitting the AI against the artist. Instead, I think it’s better to look at how AI has changed the world of chess. For a long time, people thought that a computer would never be able to beat a human at chess. Then, in 1997, IBM’s chess computer Deep Blue beat world champion Garry Kasparov, proving that computers can beat even the best humans at the game. So now, all of our chess tournaments are played by AI, and humans don’t play chess at all. Right?

Of course not. Because here’s the thing: even though a strong AI can always beat a human at chess, a human who uses AI can consistently beat even the strongest AI chess engines. In fact, there are tournaments where human-AI teams compete against each other. They aren’t as popular as human-only tournaments, since we prefer to watch humans play other humans, and the best human players prefer to play the game traditionally. But when they train, all of the top grandmasters rely on AI to hone their craft and sharpen their skills.

Chess is a great example of a field that has incorporated AI. And even though AI can play chess better than a human, AI chess players have not replaced human chess players, and they never will. Because ultimately, asking whether humans or AI are better at chess is the wrong question. AI is better at some things, and humans are better at others. The best results happen when humans use AI as a tool, either in training or in actual play. And because of how the chess world has incorporated AI, the game is more popular now than ever.

Brandon spends a lot of time angsting about whether AI writing can be considered art. Perhaps when I’m also the #1 writer in my genre, and have amassed enough wealth through my book sales that I never have to work another day in my life, I can also spend my days philosophizing about what is and is not art. But right now, I prefer a more practical approach. I’m much less concerned about what art is than I am about what it does. And the best art, in my opinion, should point us to the good, the true, and the beautiful.

Can AI do that? Can it point us to the good, the true, and the beautiful? Yes, it can, just as a photograph or a video game can; Brandon himself brings up both as counterexamples. But as with the game of chess, a human working with AI can create better art than an AI left to its own devices. I suspect this will remain true even if we reach the point where AI art surpasses purely human-made art. Because at the end of the day, AI is just a tool.

But what about Brandon’s point that “we are the art”? Isn’t it “cheating” to write a book with AI? Doesn’t that demean both the artist and the creative act?

It can, if all you do is ask ChatGPT to write you a fantasy story, in the same way that duct-taping a banana to a wall and calling it “art” is pretty demeaning (though you’ll still get plenty of armchair philosophers debating whether or not it counts, which again highlights how useless the question is). But if you spend enough time with AI to really dig into what it can do, you’ll find that it’s no more “cheating” than pointing a camera and pushing a button.

One of the first AI-written fantasy stories I generated was about a half-orc. I wrote it using ChatGPT while my wife was in labor with our second child. We were both at the hospital, and I had a lot of downtime before the action really began, so I used those few hours to write a 15,000-word novelette. It was fun, but the story itself was pretty generic, which is why I’ve never published it.

Basically, it read like an average D&D fanfic, which is exactly what every AI-generated fantasy story turns into if you don’t give it the proper constraints. If all you do is ask ChatGPT to tell you a story, it will give you a very average-feeling story. Every fantasy turns into a Tolkien clone or a D&D fanfic. Every science fiction story turns into Star Trek. It may be fun, but it’s not very good. Just average.

My first AI novel was The Riches of Xulthar, and I wrote it quite differently. Instead of just running with whatever the AI gave me, I picked and chose what I wanted to keep and discarded the stuff that didn’t work. But I still didn’t constrain the AI very much, so it went off in some pretty wild directions, which made it a challenge to decide what was good. As a result, the story ended up somewhere very different from where I would have taken it, but it was still something I could feel good about putting my name on. And of course, after generating the AI draft, I rewrote the whole book in my own words, which also helped to smooth out the story and make it my own.

Since writing The Riches of Xulthar, I’ve written (or attempted to write) some two dozen AI novels and novellas. Most of them are unfinished. Some of them are spectacular failures. I’ve published another half-dozen of them, most in the Sea Mage Cycle.

It was while I was working on the latest Sea Mage Cycle book, Bloodfire Legacy, that I finally felt I was getting a handle on how to write something really great with AI. The key is constraints. AI does best when you give it constraints that are clear and specific. The more you constrain it, the more likely you are to get something that rises above the average and approaches something great.

But to do that, you have to have a very clear and specific idea of what you want your story to look like. Which means you have to have a solid outline (or at least some really solid prewriting), and a deep understanding of story structure.

I think the real reason Brandon is so opposed to AI writing is that it negates his competitive advantage—the thing that has made him the #1 fantasy writer. Without AI, the biggest bottleneck for new and established writers is putting words on a page. Brandon made a name for himself with his ability to write a lot of words relatively quickly. Where other fantasy writers like Martin and Rothfuss have utterly failed to finish what they start, Brandon finishes everything that he starts, and he starts more series than most other writers finish. This is why he’s known as Brandon Sanderson, and not just “the guy who finished Wheel of Time.”

But generative AI removes this bottleneck. Suddenly, putting words on the page is quite easy. They might not be good words, but they might be as good as Brandon Sanderson’s words. After all, his prose isn’t exactly the most brilliant of our time. Deep down, I think Brandon feels this, which is why he sees AI as such a threat.

Will writing with AI make you lose some of your writing skills? Probably. I suspect it’s much like how coding with AI will make you weaker at writing code, at least at the line-by-line level. But coding with AI will also make you a much better software architect and designer, since it frees you up to focus on the higher-level stuff.

In a similar way, I expect that the new bottleneck for writing will have to do with the higher-level stuff: things like story structure and archetypes. The writers who will stand out in an AI-dominated writing field will be the ones with a deep and intuitive understanding of story structure, who can use that understanding to get the AI to produce something truly great. Because if you understand story structure, you can write better constraints for the AI. Pair that with a good sense of taste, and you’ve got an artist who can make some really great stuff with AI.

This is why I think Brandon’s views on AI art are not only misguided, but actually toxic. Love it or hate it, AI is just a tool. Using it doesn’t make you any less of an artist, just as using a camera instead of a paintbrush doesn’t make you any less of an artist.