They say that authors should never respond to one-star reviews. That’s generally good advice, and for most of my career, I’ve studiously kept it. However, I’ve recently begun to get a new kind of one-star review that baffles me—reviews that essentially say: “the book was good, but it was written with AI so I hate it.”

Here’s an example:

This book is written with AI. Incredibly disappointing as a reader to give a book/author a chance and then to get to the end of the book only for the “author” to then announce the AI card. If I could give zero stars, I would for this alone. I also didn’t appreciate that this use of AI was not announced until the ending Author’s Note. If “authors” are going to cut corners and put their name to computer-generated mush, they should be willing to put that information on the front cover. The book struggled to find its pace, and some parts read as though they were written for a child’s short story competition while others felt as though the writer was snorting crushed up DVDs of Pirates of the Caribbean as they wrote.

Let’s break it down:

This book is written with AI. Incredibly disappointing as a reader to give a book/author a chance and then to get to the end of the book only for the “author” to then announce the AI card.

Yes… but I can’t help but notice that you got to the end of it. In other words, you finished the book. Also, from the way you tell it, it seems that you didn’t realize the book was written with AI until you got to the very end. So based on your own behavior, it doesn’t seem that quality was the issue.

I also didn’t appreciate that this use of AI was not announced until the ending Author’s Note. If “authors” are going to cut corners and put their name to computer-generated mush, they should be willing to put that information on the front cover.

Okay… but if my book was just “computer-generated mush,” why did you finish it? And why were you surprised when you learned that it was written with AI assistance?

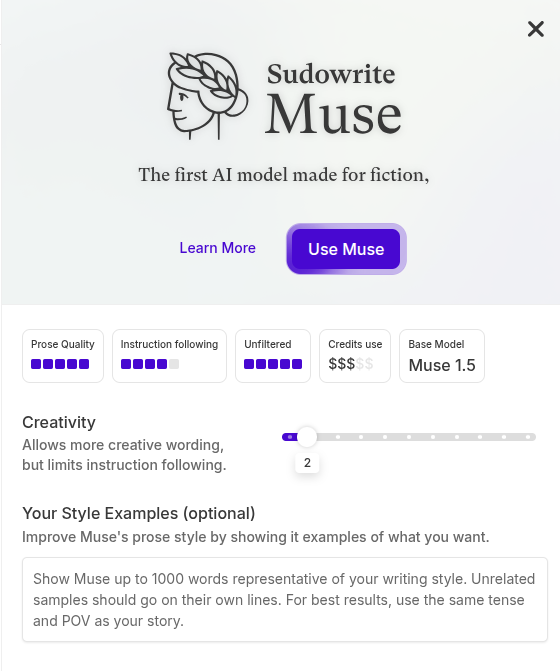

I can understand the objection to books that were written solely with AI, with little to no human input. But that’s not how I write my AI-assisted books. Instead, I outline them thoroughly beforehand, write and refine a series of meticulously detailed prompts (usually using Sudowrite), and generate multiple drafts, combining the best parts of them to make a passable AI draft. And then I rewrite the whole thing in my own words, using the AI draft as a loose guide with no copy-pasting.

Why would I go through so much trouble? Because of how the AI drafting stage gives me a bird’s eye view of the book, allowing me to identify and fix major story issues before they metastasize and give me writer’s block. Before AI, that’s where 80% of my writer’s block came from, and it often derailed my projects for months, so that it took me well over a year to write a full-length novel. But with AI, I’m no longer so focused on the page that I lose sight of the forest for the trees. So even though generating and revising a solid AI draft adds a couple more steps to the process, it’s worth it for the time and trouble that it saves.

That’s the way I use generative AI in my writing process. But there are many other ways—and I hate to break it to you, but most authors use AI in one way or another. If an author uses Grammarly to fix their spelling and grammar, should they disclose that on the cover? If they use MS Word? What if they used a chatbot to brainstorm story ideas, but went on to write it entirely themselves? Should that also be disclosed?

The book struggled to find its pace, and some parts read as though they were written for a child’s short story competition while others felt as though the writer was snorting crushed up DVDs of Pirates of the Caribbean as they wrote.

Yes… but again, I can’t help but notice that you finished the book. And after you finished it, you were surprised to learn that it was written with AI. So with all due respect, I’m going to call BS on your objections here. I think you only decided you hated the book after you learned it was written with AI, and you came up with these objections after the fact. Whatever.

I think a lot of the people who object to AI are really just scared and angry. They claim to have principled, ethical objections to the technology, but few of them apply that principled stance consistently to every area of their lives. After all, if you use Grammarly, Google Docs, or MS Word, you are using generative AI just as surely as I am using ChatGPT and Sudowrite. For most people, the ethical objections are just a smokescreen for their general fear of change. They’re fine with embracing the convenience the technology offers them in their own personal lives, but they insist that everyone else, including me, live according to their principles, no matter how inconvenient or difficult it may be.

As an example of that, check out this one-star review:

The arts! Whether visual, performance, or literary—my haloed experience has been the act of creating and sharing a connection to the profound or sublime. Why, then, would any artist—musician, dancer, sculptor, painter, or author—offload (abdicate) the act of creation to AI? Process versus product. Mr. Vasicek included an afterword for this volume, describing his workflow and the efficiency of collaboration with AI: a 6,624-word day! another volume completed! Mr. Vasicek obviously owns the skills to weave rich character development and scenes. Perhaps Mr. Vasicek’s AI collaboration explains why these characters, the plot, the narrative—and subsequently, the entire story— are so flat and undeveloped. Although his lead male shows some undeveloped promise, the mother’s too-oft used “dear” and “my love,” and the daughter’s clutching at her mother’s apron are cringe-inducing. Perhaps Mr. Vasicek might eschew AI-assisted writing, seeking a future of quality over quantity.

Let’s break it down:

The arts! Whether visual, performance, or literary—my haloed experience has been the act of creating and sharing a connection to the profound or sublime. Why, then, would any artist—musician, dancer, sculptor, painter, or author—offload (abdicate) the act of creation to AI?

Because for some of us, writing is more than a “haloed experience”—it’s an actual job. It’s what we do for a living. And if you want to do your best work, you need to use the best tools. We used to build houses with plaster and lath and wrought-iron nails, using hand tools and locally-sourced lumber. But today, you’d be a fool not to use power tools and materials sourced from a building supply store, or your local Home Depot. If that makes your building experience less profound or sublime, so be it.

Process versus product. Mr. Vasicek included an afterword for this volume, describing his workflow and the efficiency of collaboration with AI: a 6,624-word day! another volume completed!

I’m not gonna lie: there is a certain degree of tension between art-as-product and art-for-art’s-sake. But the two are not mutually exclusive. A house can still be a beautiful work of art without taking as long to build as a cathedral. Likewise, a book can still be a beautiful work of art without taking as long to write as Tolkien’s Lord of the Rings.

Again, you’re trying to pigeonhole me into your “haloed” idea of what a “true artist” should be, which would make it absolutely impossible for me to make a living at this craft. If all of us writers followed that path, there are a lot of wonderful books that would never get written. And I doubt that the overall quality of the books that do get written would rise.

Mr. Vasicek obviously owns the skills to weave rich character development and scenes.

Now we get to the interesting part. I checked this reviewer’s history, and this was the only review they’ve written for any of my books. Therefore, I can only assume that this is the only book of mine that they’ve read. But if that’s the case, how do they know that I have “the skills to weave rich character development and scenes”? If the book I wrote with AI was pure trash, why would they say that I obviously have some skill?

Once again, we’ve got a case of “I enjoyed this book, but it’s written with AI so I hate it.” In other words, it’s not the book itself that you hate, so much as the way I wrote it. You object to the idea of authors using AI, not to what they actually write with AI.

Perhaps Mr. Vasicek’s AI collaboration explains why these characters, the plot, the narrative—and subsequently, the entire story— are so flat and undeveloped. Although his lead male shows some undeveloped promise, the mother’s too-oft used “dear” and “my love,” and the daughter’s clutching at her mother’s apron are cringe-inducing.

Finally, some specific and legitimate criticism. And while I do think there’s a degree of retroactively looking for faults after enjoying the book, I’m totally willing to own that these criticisms are valid. This particular book (The Widow’s Child) was one of my first AI-assisted books, and I was still learning to use these AI tools as I was writing it. I did the best I could at the time, but if I were to write it today, I could probably do a lot better, smoothing out the annoying AI-isms that you’ve pointed out here.

But the book is currently sitting at 4.4 stars on Amazon (4.1 on Goodreads). And the other readers do not share your objections. Here is another review, pulled from the same book:

Since waiting a year or more to read the next book in a sequel is hard on my stress levels, I’m liking this AI. It means talented authors like Joe Vasicek can churn out an outline faster. Then he can bring in his talented ideas, such as the content of this heart-stopping adventure of The Widow’s Child, to fill out the nitty gritty in record time.

Clearly, it’s not the case that all (or even most) readers feel the same way about AI as you do.

Perhaps Mr. Vasicek might eschew AI-assisted writing, seeking a future of quality over quantity.

Why can’t we have both? Why can’t we have quantity with quality? Why can’t AI make us more creative, instead of replacing our human creativity?

This is all giving me flashbacks to the big debate between traditional and indie publishing, back in the early 2010s. Back then, purists said that indie publishing would destroy literature by flooding the market with crappy books, while indies argued that removing the industry middlemen would create a more dynamic market that would give readers more choices and allow more writers to make a living. Both were right to some degree, and both were also wrong about some things. In the end, we reached a middle ground where “hybrid publishing” became the norm.

The same kind of debate is happening right now between human-only purists and AI-assisted writers. The biggest difference is dead internet theory. In the early 2010s, the ratio of bots to humans on the internet was still low enough to allow for a lively debate. Today, there’s so much bot-driven outrage on the internet that most of us are just quietly doing our own thing and avoiding the debate.

That same bot- and algorithm-driven outrage is driving a lot of people to be irrationally angry at or afraid of AI. With that said, I can understand why so many people are upset. And I do think there are a lot of valid criticisms about this new technology, including its environmental impact, copyright considerations, how the models were trained, and the societal impact it’s already starting to have. But if we don’t have an honest and good-faith debate about these issues, we can’t solve any of them. And we can’t have a good-faith debate if one side is coming at it from a place of irrational anger or fear.

In any case, I find it super annoying when readers who clearly found some value or enjoyment in my books turn around and give them a one-star review merely because they don’t like how I used AI. And at the risk of going viral and inviting more one-star anti-AI reviews, I think it’s worth voicing my views on the subject and opening that debate. So what are your thoughts on the subject? How do you feel about using AI as a tool to help write books? Can we have quantity with quality? Can AI help us to be more creative, not just more productive? What has been your experience?